Cybercriminals are pioneering malicious uses of AI technology to drive next-level cyberattacks.

Following the proliferation of data breaches, many businesses are realizing that they can no longer detect breach attempts without the help of AI technologies. AI is changing the game for cybersecurity, helping to speed up response times and augment under-resourced security operations. But despite the many positive possibilities of AI, it’s not hard to imagine the criminal possibilities as well.

Imagine receiving a phone call from your CEO, who is travelling abroad, asking you to make an urgent cash transfer to a foreign firm. But in reality, it’s not your CEO. The voice on the other end of the line just sounds deceptively like him. It is actually a computer-synthesized voice, produced by AI-based software built to let someone impersonate another person over the telephone. Such a situation used to be described as the future of cybercrime, but that much-talked-about future is now here with us.

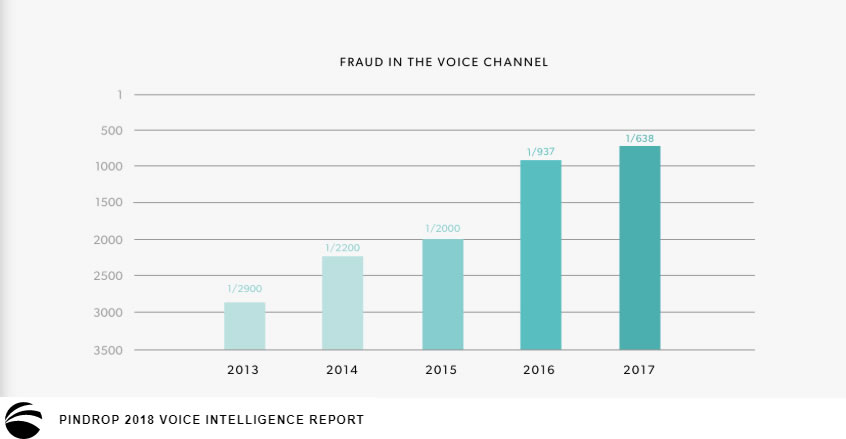

The Wall Street Journal recently reported what is considered to be the first known successful use of an AI-generated voice in cybercrime. A scammer used an AI-generated imitation of the voice of a German firm’s CEO to convince the CEO of its UK branch office to transfer USD 243,000 to a Hungarian supplier. The UK CEO thought he had received a call from the CEO of his company’s German parent. According to the report, the AI-generated call mimicked the voice and German accent of the impersonated CEO accurately enough for the UK CEO to recognize it as his boss’s voice. The rise of AI-generated voice lets almost anyone produce surprisingly realistic speech in the voice of a chosen individual, speech that a listener can find very hard to tell apart from the real voice. In a report, Pindrop reveals that the rate of voice fraud climbed over 350 percent from 2013 through 2017, with no signs of slowing down.

Malicious chatbots are also frequently used to fill online chat rooms with spam and ads by mimicking human behavior and conversations. Hackers can now exploit chatbots to deceive unsuspecting victims into revealing sensitive information, such as passwords and bank account details. Malicious chatbots look and act just like the regular chatbots you find on websites: they appear in a small pop-up window in the corner of a webpage, ask visitors and customers if they need any help, and use machine learning and natural language processing to respond intelligently. But rather than provide genuinely useful information or assistance, they trick you into revealing sensitive data, which will later be used against you. Ticketmaster (a US ticket sales and distribution company) admitted last year that a chatbot it used for customer service had been infected by malware, which gathered customers’ personal information and sent it to an unknown third party.

Of all the fears humanity has about artificial intelligence, we have somehow focused on how AI will take our jobs and relegate us to the “useless class”. However, one threat is already here: AI-generated voices and chatbots are yet another hook on the phisher’s line. They can easily be deployed to augment traditional phishing attacks by adding another layer of credibility and deception. Fraudsters are increasingly leveraging techniques like imitation, replay attacks, voice modification software and voice synthesis, often with great success.

Just as you learned to be wary of suspicious emails that ask for your sensitive data, you should likewise learn to be wary of voice calls and chatbots that ask for sensitive personal information. The best way to avoid being exploited is to verify the authenticity of any request to send money through an alternative or offline channel. Nonetheless, the responsibility for guarding against malicious bots lies primarily with website owners and chatbot creators. Security must be built into solutions that enhance the customer experience, in a way that does not undermine that experience. Any data flowing through the chatbot system should be encrypted at rest and in transit, especially if the bot handles sensitive data.
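
For teams that want to turn the "verify through another channel" advice into a written rule, the idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `TransferRequest` type, the USD 1,000 threshold and the channel names are all hypothetical, and a real policy would be set by your finance and security teams.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical policy values, chosen only for illustration.
CONFIRMATION_THRESHOLD_USD = 1_000
TRUSTED_CONFIRMATION_CHANNELS = {"in_person", "callback_known_number"}


@dataclass
class TransferRequest:
    requester: str                       # who the caller or sender claims to be
    amount_usd: float                    # amount requested
    request_channel: str                 # how the request arrived, e.g. "phone"
    confirmed_via: Optional[str] = None  # channel used to confirm it, if any


def may_execute(req: TransferRequest) -> bool:
    """Return True only if the request passes the out-of-band check."""
    # Small payments are allowed without extra confirmation (assumed policy).
    if req.amount_usd < CONFIRMATION_THRESHOLD_USD:
        return True
    # Larger payments need a confirmation...
    if req.confirmed_via is None:
        return False
    # ...that did not come through the same channel as the request itself...
    if req.confirmed_via == req.request_channel:
        return False
    # ...and that used a channel the organization already trusts.
    return req.confirmed_via in TRUSTED_CONFIRMATION_CHANNELS


if __name__ == "__main__":
    urgent_call = TransferRequest("CEO", 243_000, "phone")
    print(may_execute(urgent_call))  # blocked: no independent confirmation yet

    urgent_call.confirmed_via = "callback_known_number"
    print(may_execute(urgent_call))  # allowed: confirmed via a known number
```

The point of the rule is that a convincing voice alone is never enough: the confirmation has to travel over a channel the fraudster does not control, such as calling the executive back on a number already on file.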